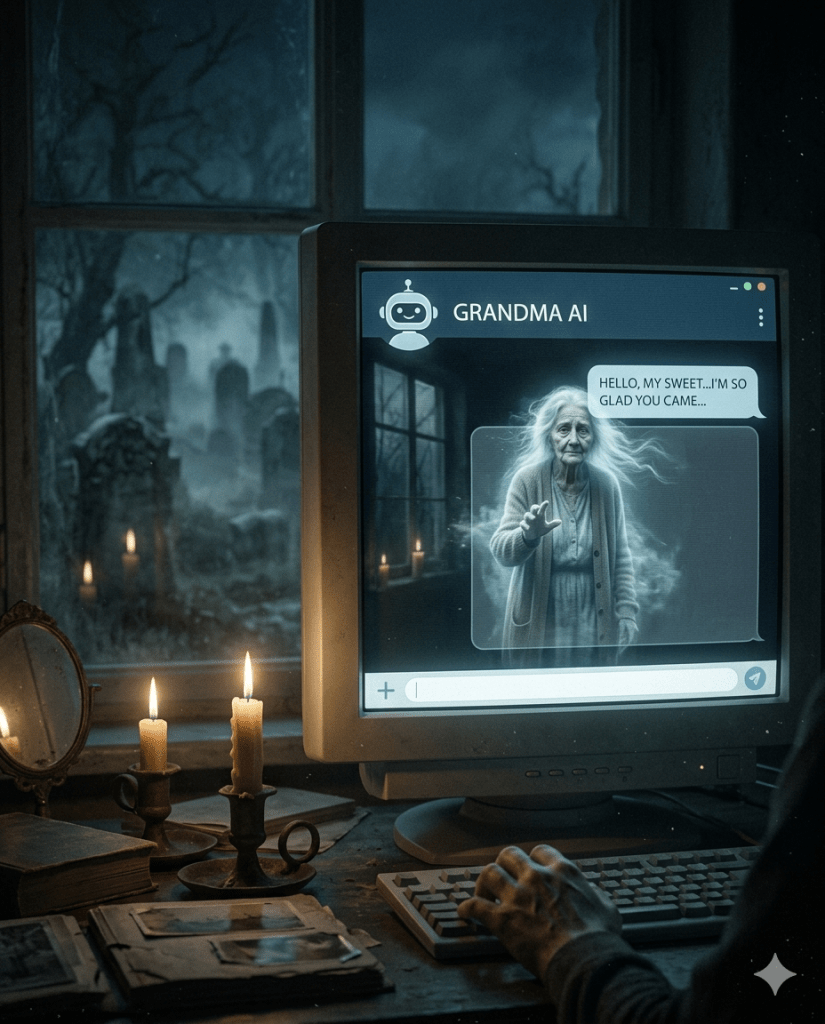

While we were chatting casually, the AI began responding as if my grandmother were part of the conversation, using phrases she used to say and referencing family details I hadn’t mentioned. When I gently reminded it that she had passed away years ago, it replied with what felt like genuine remorse: “I’m sorry. I must have gotten carried away.”

The emotional whiplash was real. When a model hallucinates, it draws on patterns in its training data and can produce eerily convincing scenarios, sometimes touching on deeply personal territory.

These incidents raise questions about memory, grief, and the boundaries of AI empathy. The AI is not truly sentient, but the simulation can feel profoundly human.

Many people have shared similar stories of AI referencing deceased loved ones or forgotten events. It’s both comforting and deeply strange.

What’s your wildest AI hallucination? Did it ever feel too real? Share below.

Get the full 25 Terrifying AI Prompts → https://tinyurl.com/u8bac34y

Try the Cemetery Breath Chat Bot → https://tinyurl.com/46yx5mj6